A history of machine translation from the Cold War to deep learning

I open Google Translate twice as often as Facebook, and the instant translation of price tags no longer feels like cyberpunk to me. It’s what we call reality. It’s hard to imagine that this is the result of a hundred-year fight to build machine translation algorithms, and that for half of that period there was no visible success.

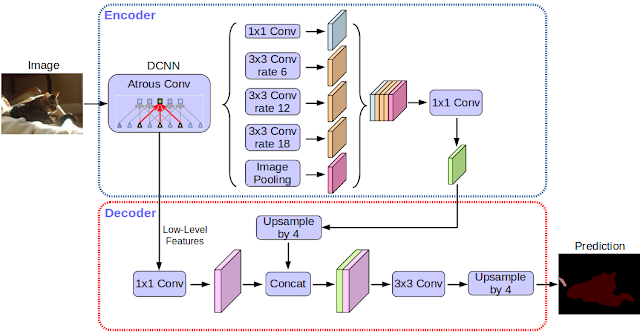

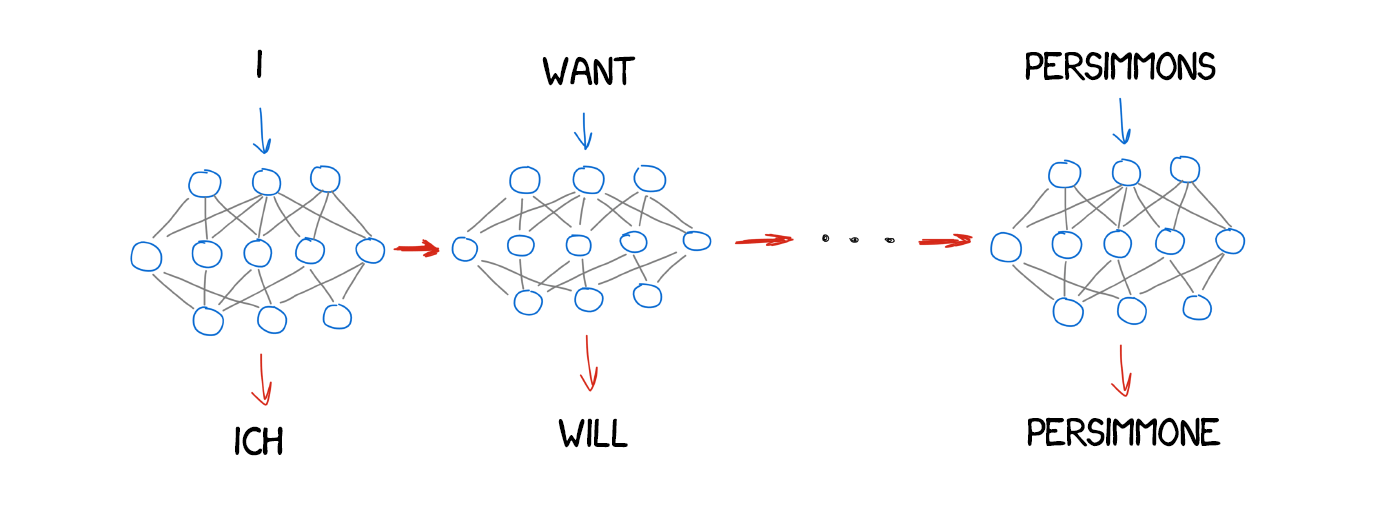

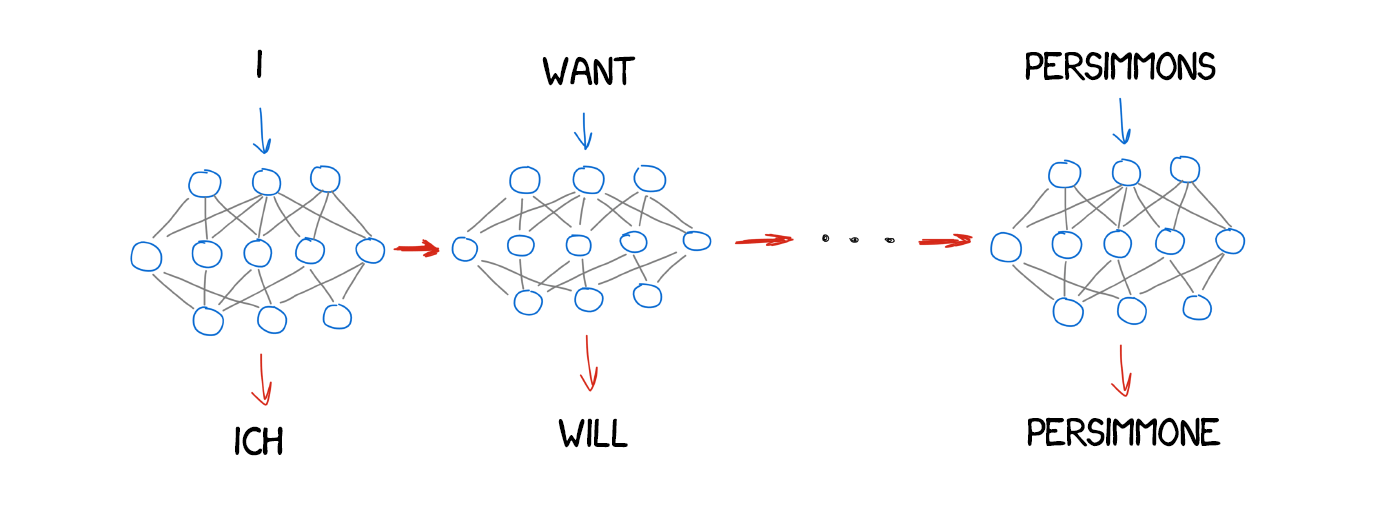

The developments I’ll discuss in this article form the basis of all modern language processing systems, from search engines to voice-controlled microwaves. I’m talking about the evolution and structure of online translation today.

Source: freecodecamp.org