Behind the Motion Photos Technology in Pixel 2

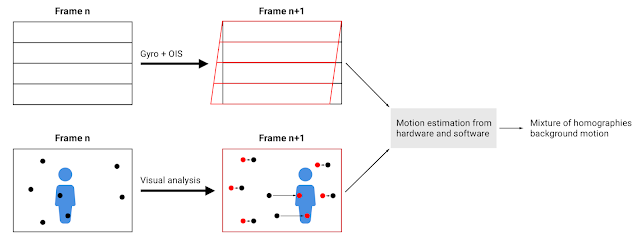

Background motion estimation in motion photos. Using motion metadata from the gyroscope and optical image stabilization (OIS), we can accurately classify features from the visual analysis as foreground or background.
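The idea in the caption can be illustrated with a minimal sketch. This is not the Pixel 2 implementation. It assumes that the gyro reading has already been integrated into a small yaw/pitch rotation between two frames, that the OIS lens offset is available in pixels, and that the focal length is known in pixels. The function names, the sign conventions, and the 2-pixel threshold are all hypothetical. A tracked feature whose observed displacement matches the displacement predicted from camera motion is labeled background; anything else is labeled foreground.

```python
import math


def predicted_shift(yaw_rad, pitch_rad, focal_px, ois_shift_px=(0.0, 0.0)):
    """Approximate image shift (in pixels) of distant background content.

    Assumes a small, pure rotation of the camera between two frames and
    adds the (hypothetical) OIS lens offset, also expressed in pixels.
    """
    dx = focal_px * math.tan(yaw_rad) + ois_shift_px[0]
    dy = focal_px * math.tan(pitch_rad) + ois_shift_px[1]
    return dx, dy


def classify_features(tracks, shift, threshold_px=2.0):
    """Label each feature track as 'background' or 'foreground'.

    tracks: list of ((x0, y0), (x1, y1)) positions in consecutive frames.
    shift: predicted background displacement from predicted_shift().
    threshold_px: assumed tolerance on the residual, in pixels.
    """
    labels = []
    for (x0, y0), (x1, y1) in tracks:
        # Residual between observed motion and camera-induced motion.
        residual = math.hypot((x1 - x0) - shift[0], (y1 - y0) - shift[1])
        labels.append("background" if residual <= threshold_px else "foreground")
    return labels


# Example: a 0.01 rad yaw with a 1000 px focal length predicts ~10 px of shift.
shift = predicted_shift(yaw_rad=0.01, pitch_rad=0.0, focal_px=1000.0)
tracks = [
    ((100.0, 100.0), (110.0, 100.0)),  # moves with the camera
    ((200.0, 200.0), (225.0, 190.0)),  # moves independently
]
labels = classify_features(tracks, shift)
```

In this toy example the first track follows the predicted camera motion and the second does not. A real pipeline would need a full rotation model and per-frame timestamps rather than a single translation.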

Source: googleblog.com