An Introduction to Hashing in the Era of Machine Learning

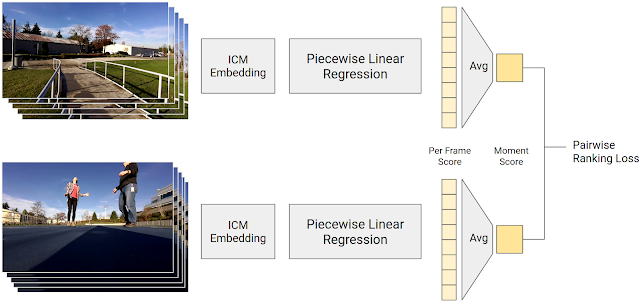

In December 2017, researchers at Google and MIT published a provocative research paper about their work on “learned index structures”. The research is quite exciting, as the authors themselves make clear in the abstract. Indeed, the results presented by the team include findings that could signal new competition for the most venerable stalwarts in the world of indexing: the B-Tree and the Hash Map. The engineering community is ever abuzz about the future of machine learning; as such, the research paper has made its rounds on Hacker News, Reddit, and through the halls of engineering communities worldwide.

In response to the findings of the Google/MIT collaboration, Peter Bailis and a team of Stanford researchers went back to the basics and warned us not to throw out our algorithms books just yet. Bailis and his team recreated the learned index strategy and were able to achieve similar results without any machine learning by using a classic hash table strategy called Cuckoo Hashing. So what’s all the fuss about?

Are hash maps and B-Trees destined to become aging hall-of-famers? Are machines about to rewrite the algorithms textbook? What would it mean for the computing world if machine learning strategies really are better than the general-purpose indexes we know and love?

Under what conditions will the learned indexes outperform the old standbys?

Source: bradfieldcs.com