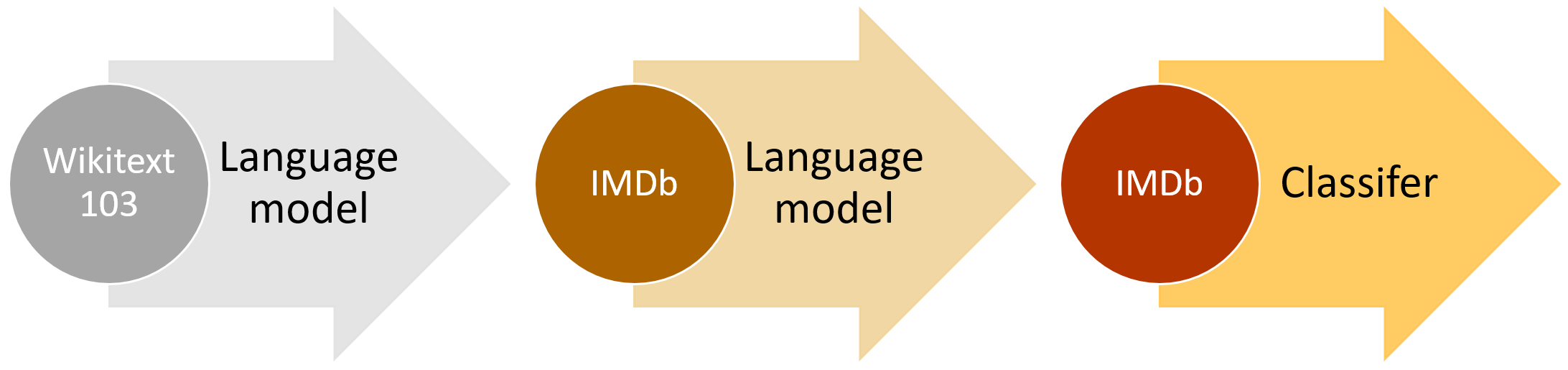

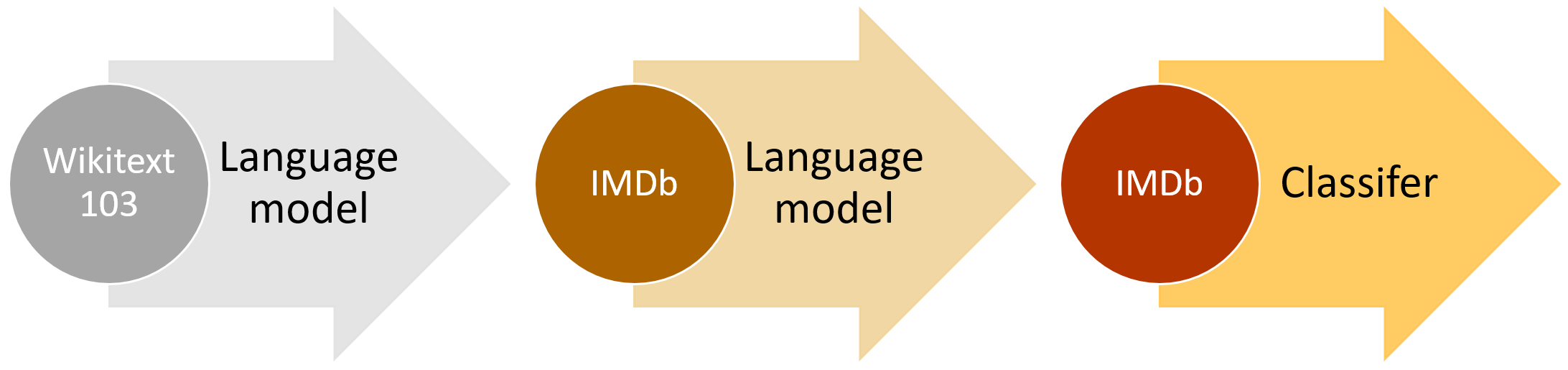

Introducing state-of-the-art text classification with universal language models

This post is a lay-person’s introduction to our new paper, which shows how to classify documents automatically with higher accuracy and lower data requirements than previous approaches. We’ll explain in simple terms: natural language processing; text classification; transfer learning; language modeling; and how our approach brings these ideas together. If you’re already familiar with NLP and deep learning, you’ll probably want to jump over to our NLP classification page for technical links.

Today we’re releasing our paper Universal Language Model Fine-tuning for Text Classification (ULMFiT), pre-trained models, and full source code in the Python programming language. The paper has been peer-reviewed and accepted for presentation at the Annual Meeting of the Association for Computational Linguistics (ACL 2018). For links to videos providing an in-depth walk-through of the approach, all the Python modules used, pre-trained models, and scripts for building your own models, see our NLP classification page.

This method dramatically improves over previous approaches to text classification, and the code and pre-trained models allow anyone to leverage this new approach to better solve problems such as:

- Finding documents relevant to a legal case;
- Identifying spam, bots, and offensive comments;
- Classifying positive and negative reviews of a product;
- Grouping articles by political orientation;
- …and much more.

Source: fast.ai