Tensor Compilers: Comparing PlaidML, Tensor Comprehensions, and TVM


One of the most complex and performance-critical parts of any machine learning framework is its support for device-specific acceleration. Indeed, without efficient GPU acceleration, much of modern ML research and deployment would not be possible. This acceleration support is also a critical bottleneck, both for adding support for a wider range of hardware targets (including mobile) and for writing new research kernels.

Much of NVIDIA’s dominance in machine learning can be attributed to its greater level of software support, largely in the form of the cuDNN acceleration library. We wrote PlaidML to overcome this bottleneck.

PlaidML can automatically generate efficient GPU acceleration kernels for a wide range of hardware, covering both existing machine learning operations and new research kernels. Writing a kernel is a complex process, and GPU kernels have typically been written by hand.

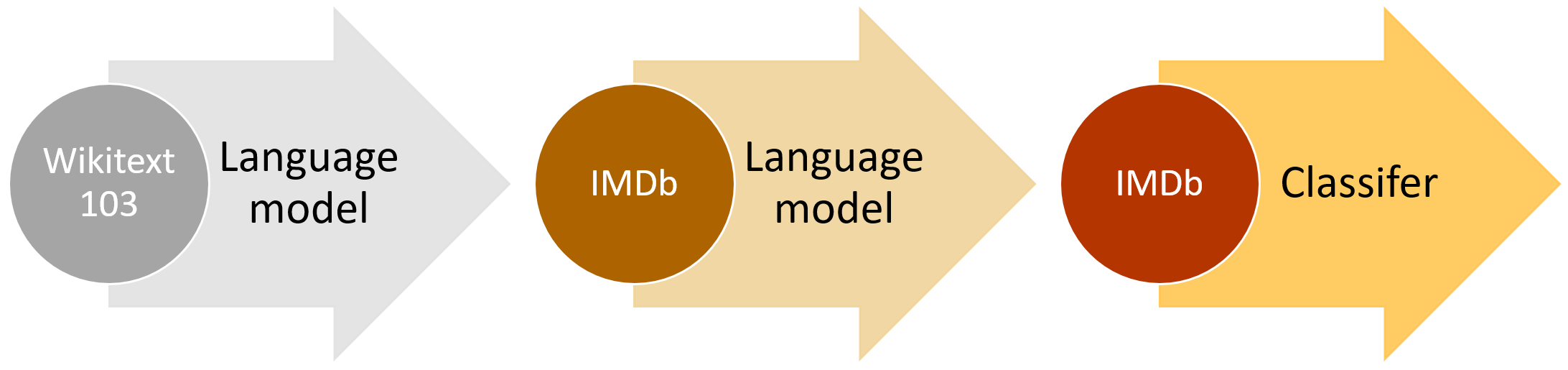

Along with PlaidML, two additional projects, Tensor Comprehensions and TVM, are attempting to change this paradigm. The Tensor Comprehensions team makes the case for the importance of these technologies in its very well-written announcement.

Source: vertex.ai