Using AI and satellite imagery for disaster insights

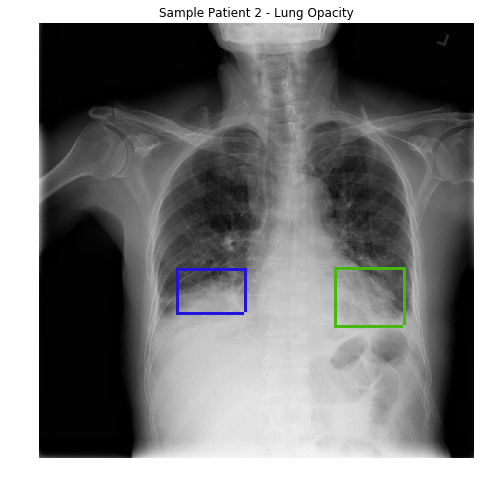

Researchers propose a framework for using convolutional neural networks (CNNs) on satellite imagery to identify the areas most severely affected by a disaster. The method has the potential to produce more accurate information in far less time than current manual methods. Ultimately, the goal of the research is to let rescue workers quickly identify where aid is needed most, without relying on manually annotated, disaster-specific data sets.

The researchers train CNN models to detect human-made features, such as roads. Existing approaches to disaster impact analysis depend on training data sets that are expensive to produce because they require time-consuming manual annotation (for instance, annotating buildings damaged by fire as a new class). The new method uses only general road and building data sets.

These data sets are readily available, which lets the method scale to other, similar natural disasters. Because differences due to changing seasons, time of day, or other factors can cause erroneous results when images are compared directly, the models are first trained to detect high-level features (such as roads). The trained models then generate prediction masks for regions experiencing a disaster.
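As a rough illustration of what a prediction mask is, the sketch below thresholds a per-pixel probability map into a binary mask. It assumes the segmentation model outputs one probability per pixel; the `to_mask` helper, the 0.5 threshold, and the sample values are hypothetical and do not come from the source.

```python
def to_mask(prob_map, threshold=0.5):
    """Turn per-pixel feature probabilities into a binary prediction mask.

    prob_map is a list of rows, each a list of floats in [0, 1].
    The threshold is an assumed value, not one taken from the source.
    """
    return [[1 if p >= threshold else 0 for p in row] for row in prob_map]


# Hypothetical 3x4 road-probability map, standing in for model output.
probs = [
    [0.9, 0.8, 0.1, 0.0],
    [0.2, 0.7, 0.6, 0.1],
    [0.0, 0.1, 0.95, 0.4],
]

mask = to_mask(probs)
for row in mask:
    print(row)
```

A mask like this marks where the model believes a feature (here, a road) is present, which is the quantity compared across the before and after images.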

By computing the relative change between features extracted from images captured before and after a disaster, it is possible to identify the areas of maximum change. The researchers also propose a new metric to quantify the detected changes: the Disaster Impact Index (DII), which is normalized for different types of features and disasters. On data sets from the Hurricane Harvey flooding and the Tubbs Fire in Santa Rosa, CA, the researchers report a strong correlation between the DII calculated by the model and the actual areas affected.
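The source does not give the formula for the DII, so the following is only a sketch of the general idea of a normalized before/after change score, not the researchers' definition. It assumes two binary feature masks of the same size and, for each grid cell, reports the fraction of pre-disaster feature pixels that are no longer detected afterwards. The `change_index` function, the cell size, and the sample masks are all hypothetical.

```python
def change_index(before, after, cell=2):
    """Per-cell fraction of pre-disaster feature pixels missing afterwards.

    before and after are equally sized binary masks (lists of rows of 0/1).
    Cells with no pre-disaster feature pixels score 0.0 (an assumed convention).
    """
    rows, cols = len(before), len(before[0])
    grid = []
    for r0 in range(0, rows, cell):
        grid_row = []
        for c0 in range(0, cols, cell):
            had = lost = 0
            for r in range(r0, min(r0 + cell, rows)):
                for c in range(c0, min(c0 + cell, cols)):
                    if before[r][c]:
                        had += 1
                        if not after[r][c]:
                            lost += 1
            grid_row.append(lost / had if had else 0.0)
        grid.append(grid_row)
    return grid


# Hypothetical 4x4 road masks before and after an event.
before = [
    [1, 1, 0, 0],
    [1, 1, 0, 0],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
after = [
    [1, 1, 0, 0],
    [1, 0, 0, 0],
    [0, 0, 0, 0],
    [0, 0, 0, 1],
]

index = change_index(before, after, cell=2)
for row in index:
    print(row)
```

Dividing by the pre-disaster pixel count keeps each score between 0 and 1, so cells with dense and sparse features can be compared on the same scale. Under this sketch, the cells with the highest scores would be the candidate areas of maximum change.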

Source: fb.com