Rate Limiting at the Edge

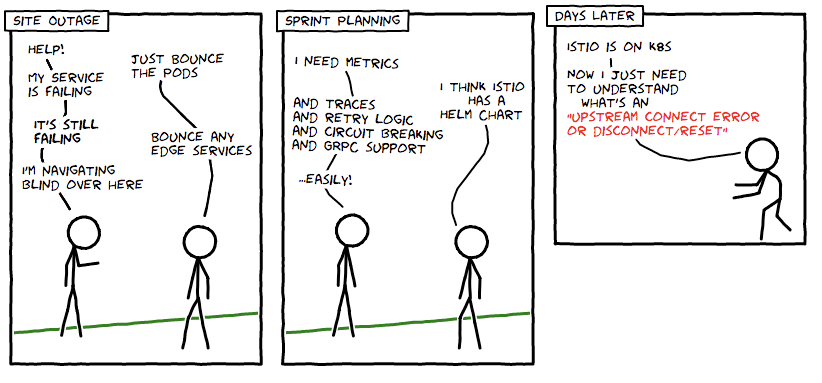

I’m sure many of you have heard of the “Death Star Security” model (hardening the perimeter without paying much attention to the inner core). While this is generally considered bad form in the current cloud native landscape, there are still many things that need to be implemented at the edge in order to provide both operational and business logic support. One of these things is rate limiting. Modern applications and APIs can experience bursts of traffic over a short time period, for both good and bad reasons, and these bursts need to be managed well if your business model relies upon the successful completion of requests by paying customers.
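To make the idea of absorbing a burst concrete, here is a minimal sketch of a token bucket, one common rate limiting algorithm. It is an illustration only, not how any particular edge proxy or gateway implements rate limiting; the class name `TokenBucket` and its parameters `rate`, `capacity`, and `clock` are hypothetical names chosen for this example.

```python
import time


class TokenBucket:
    """Illustrative token bucket (hypothetical names, not a real gateway API).

    Allows bursts of up to `capacity` requests, then sustains `rate` requests
    per second as tokens are refilled.
    """

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate = rate              # tokens added per second
        self.capacity = capacity      # maximum burst size
        self.clock = clock            # injectable clock, handy for testing
        self.tokens = float(capacity) # start with a full bucket
        self.last = clock()

    def allow(self, cost=1.0):
        """Return True if the request may proceed, False if it should be rejected."""
        now = self.clock()
        # Refill according to elapsed time, never exceeding the capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False
```

With `TokenBucket(rate=100, capacity=200)`, a client could send a burst of 200 requests at once and would then be held to roughly 100 requests per second. At the edge, a rejected request would typically be answered with an HTTP 429 response.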

Rate limiting also allows requests to be prioritised. Modern cloud native applications typically rely on third-party external services to provide end-user functionality, for example, a payment provider or storage services. If these services (or your own internal dependencies) experience issues, techniques like load shedding, where lower-priority requests are deliberately rejected, can reduce pressure on upstream services and prevent a simple service degradation from becoming a full-scale cascading outage.
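The prioritisation and load shedding described above can be sketched as follows. This is a simplified, single-threaded illustration under stated assumptions: the priority tiers (`PAYING`, `FREE`, `BACKGROUND`), the `LoadShedder` class, and the per-tier limits are all hypothetical and are not taken from any specific product.

```python
from enum import IntEnum


class Priority(IntEnum):
    # Hypothetical tiers for illustration; a real system defines its own.
    PAYING = 0
    FREE = 1
    BACKGROUND = 2


class LoadShedder:
    """Sheds lower-priority requests first as in-flight load rises."""

    def __init__(self, limits):
        # limits maps each Priority to the in-flight count at which that
        # tier starts being rejected (assumed values, tuned per system).
        self.limits = limits
        self.in_flight = 0

    def try_acquire(self, priority):
        """Admit the request if its tier's limit has not been reached."""
        if self.in_flight >= self.limits[priority]:
            return False  # shed this request
        self.in_flight += 1
        return True

    def release(self):
        """Call when an admitted request completes."""
        self.in_flight = max(0, self.in_flight - 1)


shedder = LoadShedder({
    Priority.PAYING: 10,      # paying customers are shed last
    Priority.FREE: 8,
    Priority.BACKGROUND: 5,   # background work is shed first
})
```

In this sketch, once five requests are in flight, background work is rejected while free and paying traffic still gets through; paying customers are only rejected when the system is at its full limit. A production version would also need locking or atomic counters to be safe under concurrency.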

Source: getambassador.io