Server Name Indication (SNI) Support Now in Ambassador

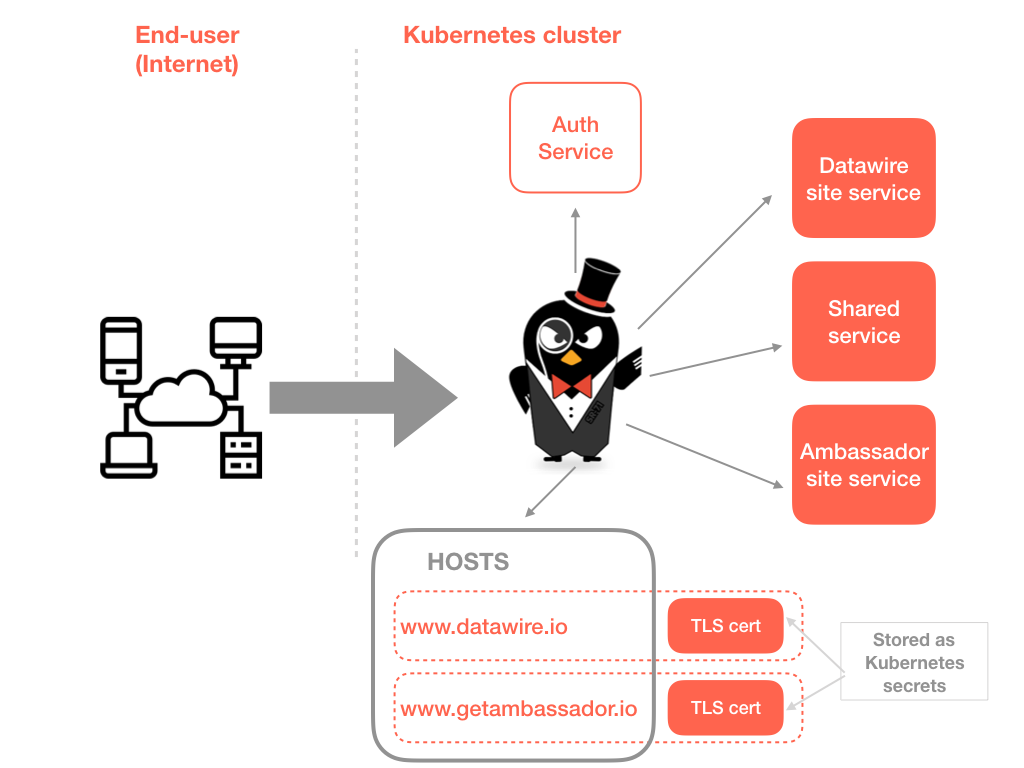

We’ve discussed many interesting use cases for SNI support in the edge proxy/gateway with both open source and commercially supported users of Ambassador. In a nutshell (and with thanks to Wikipedia), SNI is an extension to the TLS protocol that lets a client indicate which hostname it is attempting to connect to at the start of the TLS handshake. This allows the server to present multiple certificates on the same IP address and TCP port number, which in turn enables serving multiple secure websites or API services without requiring all of those sites to use the same certificate.
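To make the mechanism concrete, here is a minimal sketch of server-side certificate selection using Python's standard `ssl` module. It is not Ambassador's implementation, only an illustration of the idea: the helper names `build_contexts` and `make_sni_router`, and any certificate file paths you pass in, are assumptions made for this example.

```python
import ssl


def build_contexts(cert_files):
    """Build one server-side TLS context per hostname.

    cert_files maps hostname -> (certfile, keyfile). The paths are
    hypothetical and must point at real PEM files when this is called.
    """
    contexts = {}
    for host, (certfile, keyfile) in cert_files.items():
        ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
        ctx.load_cert_chain(certfile, keyfile)
        contexts[host.lower()] = ctx
    return contexts


def make_sni_router(contexts_by_host, default_host):
    """Return a callback suitable for SSLContext.sni_callback.

    The callback swaps in the context (and therefore the certificate)
    that matches the hostname the client sent in its ClientHello.
    """
    def choose_context(ssl_socket, server_name, initial_context):
        # server_name is None when the client sent no SNI extension.
        host = (server_name or default_host).lower()
        # Unknown hostnames fall back to the default certificate.
        ssl_socket.context = contexts_by_host.get(
            host, contexts_by_host[default_host]
        )
        return None  # None tells the ssl module to continue the handshake

    return choose_context
```

A listener would attach the router with `listener_context.sni_callback = make_sni_router(contexts, "www.datawire.io")`. On the client side, the hostname is sent by passing `server_hostname=` to `SSLContext.wrap_socket`.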

For those of you who have configured edge proxies and API gateways in the past, SNI is the conceptual equivalent of HTTP/1.1 name-based virtual hosting, but for HTTPS. Many people run Kubernetes clusters that offer multiple backend services to end users, and frequently they want to serve secure traffic under multiple hostnames. This makes it easy to differentiate the services on offer (e.g. www.datawire.io and api.dw.io), and it supports exposing multiple in-house, web-addressable brands that share backend services from a single cluster (e.g. www.fashion-brand-one.com and www.fashion-brand-two.com). The Ambassador SNI documentation provides a step-by-step guide to configuration, but I’ve also provided a summary here.

Source: getambassador.io