Hash Your Way To a Better Neural Network

The computer industry has been busy in recent years trying to figure out how to speed up the calculations needed for artificial neural networks—either for their training or for what’s known as inference, when the network is performing its function. In particular, much effort has gone into designing special-purpose hardware to run such computations. Google, for example, developed its Tensor Processing Unit, or TPU, first described publicly in 2016.

More recently, Nvidia introduced its V100 Graphics Processing Unit, which the company says was designed for AI training and inference, along with other high-performance computing needs. And startups focused on other kinds of hardware accelerators for neural networks abound. Perhaps they’re all making a giant mistake.

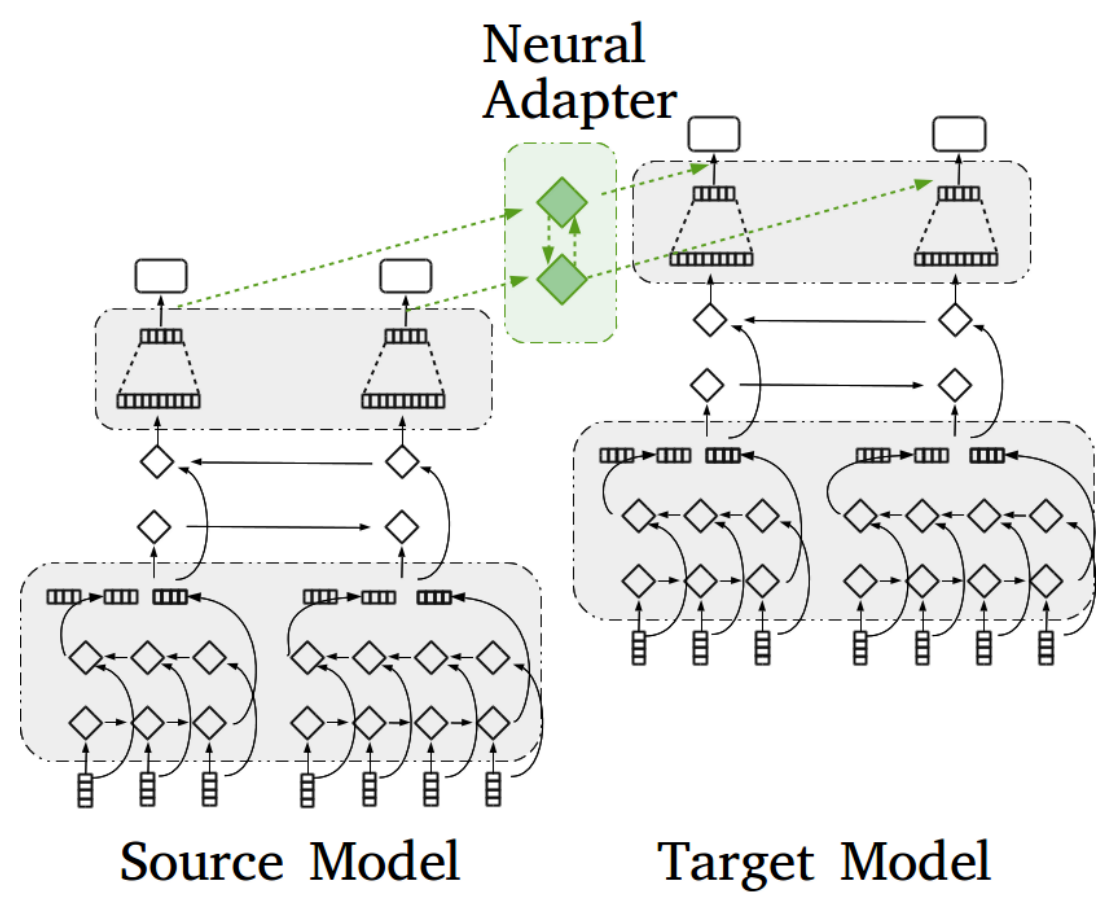

That’s the message, anyway, in a paper posted last week on the arXiv pre-print server. In it, the authors, Beidi Chen, Tharun Medini, and Anshumali Shrivastava of Rice University, argue that the specialized hardware that’s been developed for neural networks may be optimized for the wrong algorithm. You see, operating these networks typically hinges on how fast the hardware can perform matrix multiplications, which are used to determine the output of each artificial neuron—its “activation”—for a given set of input values.

Matrix multiplication comes into it because each value input to a given neuron is multiplied by a corresponding weight parameter, and the resulting products are then summed. That multiply-and-sum step is the basic operation in matrix multiplication.
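To make the multiply-and-sum idea concrete, here is a minimal sketch in plain Python. The function names and the example numbers are invented for illustration and do not come from the paper. The sketch also leaves out the nonlinear function that real networks usually apply to each sum afterward.

```python
def neuron_weighted_sum(inputs, weights):
    """Multiply each input by its weight, then add up the products."""
    return sum(x * w for x, w in zip(inputs, weights))


def layer_weighted_sums(inputs, weight_matrix):
    """Compute the weighted sum for every neuron in a layer.

    Each row of weight_matrix holds one neuron's weights, so this is a
    matrix-vector product written out by hand.
    """
    return [neuron_weighted_sum(inputs, row) for row in weight_matrix]


# Hypothetical example: three input values feeding a layer of two neurons.
inputs = [1.0, 2.0, 3.0]
weight_matrix = [
    [0.5, -1.0, 2.0],  # weights of neuron 0
    [1.0, 0.0, -1.0],  # weights of neuron 1
]

print(layer_weighted_sums(inputs, weight_matrix))  # [4.5, -2.0]
```

Feeding a batch of input vectors through the same layer turns this matrix-vector product into a full matrix-matrix product, which is the operation that accelerator hardware is built to speed up.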

Source: ieee.org