Releasing Pythia for vision and language multimodal AI models

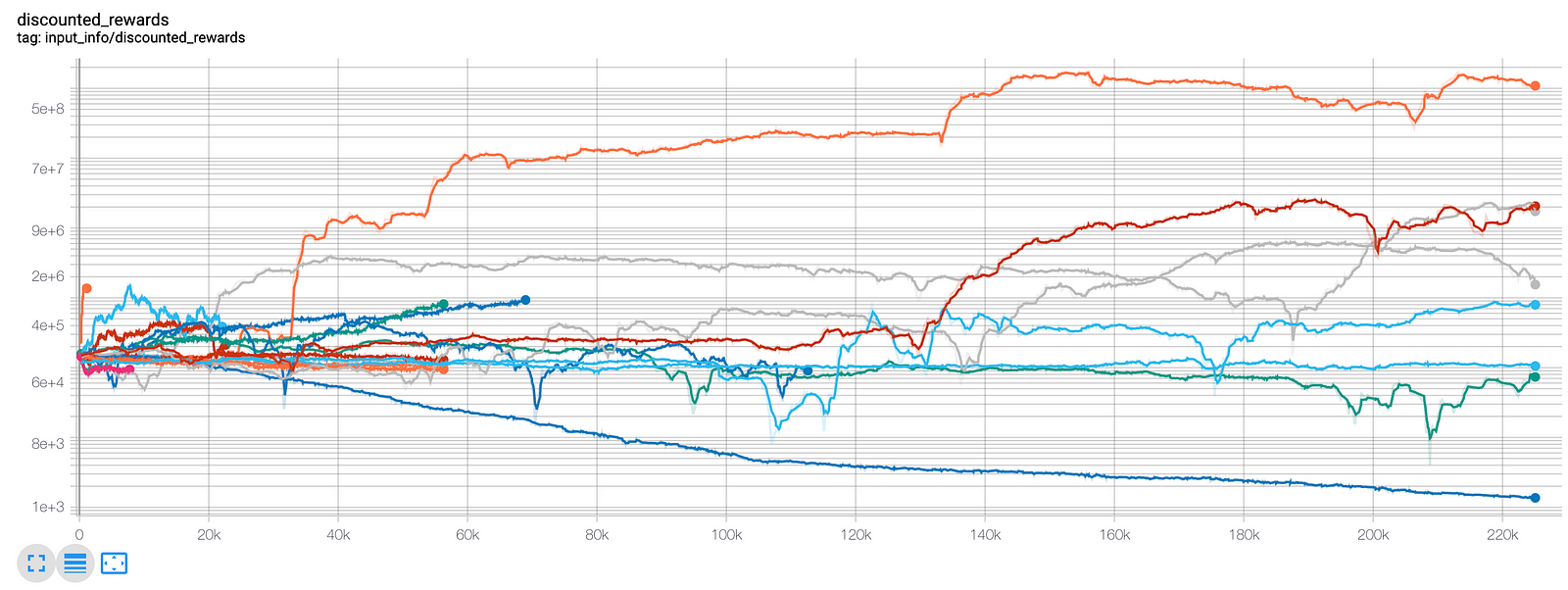

Pythia is a deep learning framework that supports multitasking in the vision and language domain. Built on our open-source PyTorch framework, Pythia has a modular, plug-and-play design that enables researchers to quickly build, reproduce, and benchmark AI models. It is designed for vision and language tasks, such as answering questions related to visual data and automatically generating image captions.

Pythia incorporates elements of our winning entries in recent AI competitions (the VQA Challenge 2018 and VizWiz Challenge 2018). Features include reference implementations that show how previous state-of-the-art models achieved related benchmark results and that make it possible to quickly gauge the performance of new models. In addition to multitasking, Pythia also supports distributed training and a variety of datasets, as well as custom losses, metrics, scheduling, and optimizers.
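To make the plug-and-play idea concrete, here is a minimal sketch of how custom losses and metrics could be registered by name and selected from a configuration. It is written in plain Python and is not Pythia's actual API: the `register` and `build` helpers, the `_REGISTRY` dictionary, and the component names are all hypothetical and chosen only for illustration.

```python
from typing import Callable, Dict, List

# Hypothetical registry: these names are illustrative, not Pythia's API.
_REGISTRY: Dict[str, Dict[str, Callable]] = {"loss": {}, "metric": {}}


def register(kind: str, name: str) -> Callable:
    """Return a decorator that stores a function under (kind, name)."""
    def decorator(fn: Callable) -> Callable:
        _REGISTRY[kind][name] = fn
        return fn
    return decorator


def build(kind: str, name: str) -> Callable:
    """Look up a registered component by kind and name."""
    try:
        return _REGISTRY[kind][name]
    except KeyError:
        raise KeyError(f"no {kind} registered as {name!r}") from None


@register("loss", "mean_squared_error")
def mean_squared_error(predictions: List[float], targets: List[float]) -> float:
    # Average of squared differences between predictions and targets.
    return sum((p - t) ** 2 for p, t in zip(predictions, targets)) / len(targets)


@register("metric", "accuracy")
def accuracy(predictions: List[int], targets: List[int]) -> float:
    # Fraction of predictions that exactly match their targets.
    return sum(p == t for p, t in zip(predictions, targets)) / len(targets)


# A config names the components, so swapping one is a one-line change.
config = {"loss": "mean_squared_error", "metric": "accuracy"}
loss_fn = build("loss", config["loss"])
metric_fn = build("metric", config["metric"])
```

In a design like this, a researcher adds a new loss or metric by decorating a function, and the training code never needs to change, which is one common way frameworks keep experiments easy to reproduce and compare.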

Pythia smooths the process of entering the growing subfield of vision and language and frees researchers to focus on faster prototyping and experimentation. Our goal is to accelerate progress by increasing the reproducibility of these models and results. This will make it easier for the community to build on, and benchmark against, successful systems.

We hope that removing some of these obstacles will allow researchers to more quickly develop new ways for people and intelligent machines to communicate. This work should also help researchers develop adaptive AI that synthesizes multiple kinds of understanding into a more context-based, multimodal understanding. In addition to this open-source release, we plan to continue adding tools, tasks, datasets, and reference models.

Source: fb.com