First Programmable Memristor Computer


Michigan team builds memristors atop standard CMOS logic to demo a system that can do a variety of edge-computing AI tasks

Hoping to speed AI and neuromorphic computing and cut down on power consumption, startups, scientists, and established chip companies have all been looking to do more computing in memory rather than in a processor’s computing core. Memristors and other nonvolatile memory seem to lend themselves to the task particularly well. However, most demonstrations of in-memory computing have been in standalone accelerator chips that either are built for a particular type of AI problem or need the off-chip resources of a separate processor in order to operate.

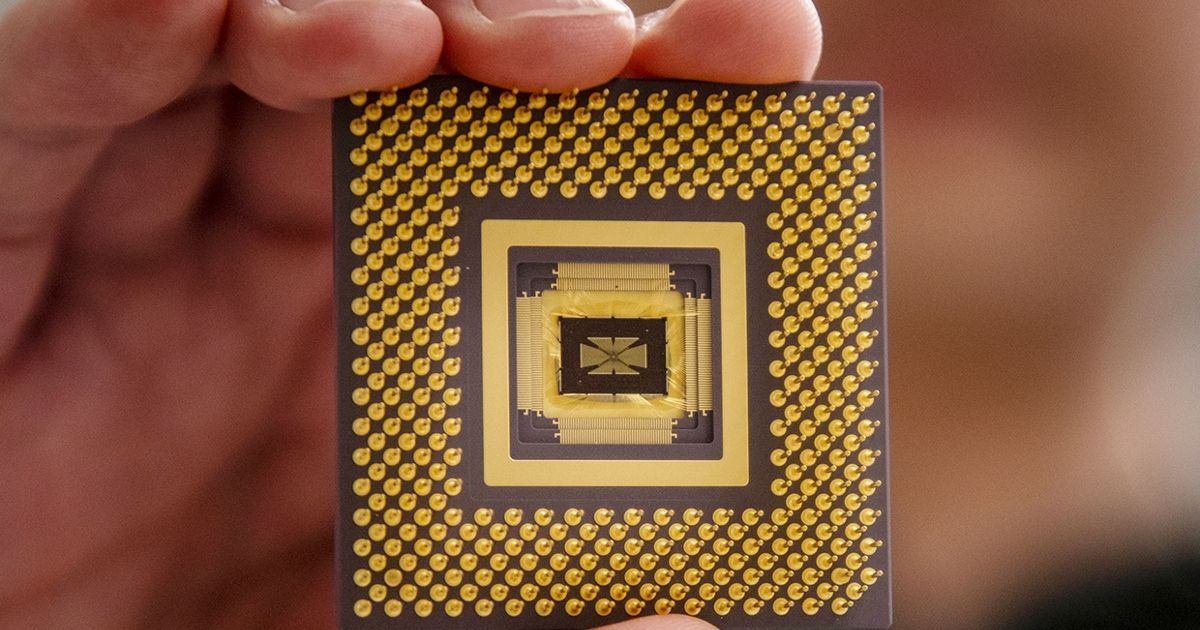

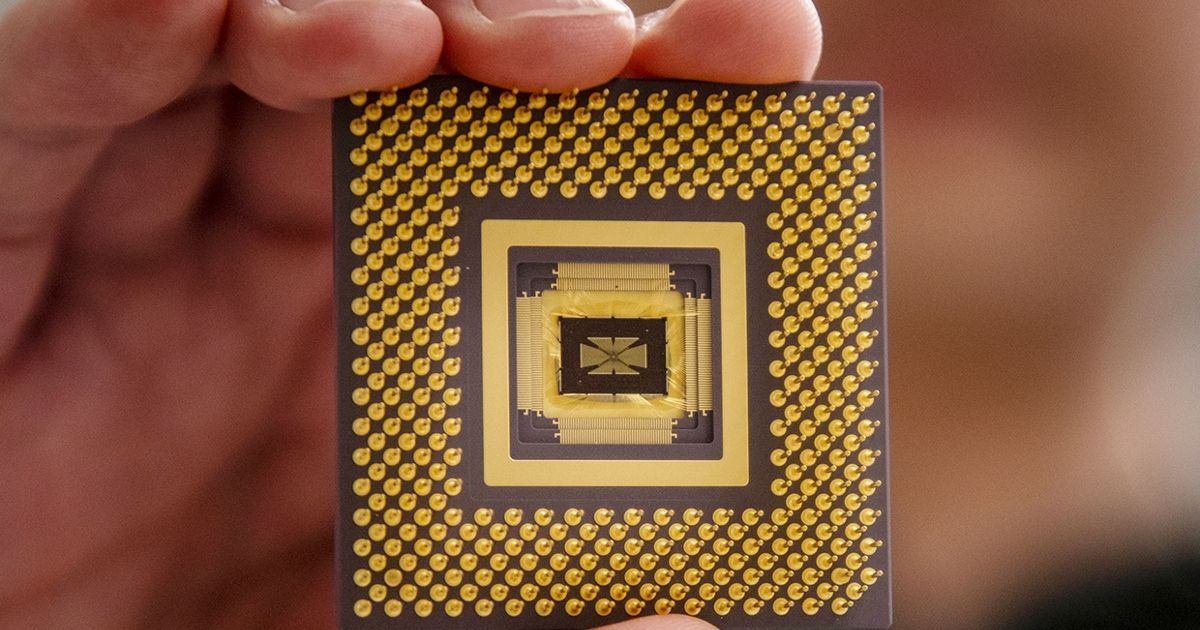

University of Michigan engineers are claiming the first memristor-based programmable computer for AI that can work on its own. The Michigan lab has been working with memristors (also called resistive RAM, or RRAM), which store data as resistance, for more than a decade and has demonstrated their potential to efficiently perform AI computations such as the multiply-and-accumulate operations at the heart of deep learning. Arrays of memristors can do these tasks efficiently because the operations become analog computations instead of digital ones.
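To make the analog multiply-and-accumulate concrete, here is a minimal sketch of an idealized crossbar. It assumes each memristor behaves as a fixed conductance, inputs are applied as row voltages, and each column wire sums the currents flowing into it (Ohm's law plus Kirchhoff's current law). The function name, the 2×2 array, and the numeric values are illustrative assumptions, not details of the Michigan chip.

```python
def crossbar_mac(conductances, voltages):
    """Column currents of an idealized memristor crossbar (hypothetical helper).

    conductances[i][j]: conductance in siemens of the device joining row i to column j.
    voltages[i]: voltage in volts applied to row i.
    Returns one current per column: I_j = sum_i G_ij * V_i.
    """
    n_rows = len(conductances)
    n_cols = len(conductances[0])
    if len(voltages) != n_rows:
        raise ValueError("need exactly one input voltage per row")

    currents = [0.0] * n_cols
    for i in range(n_rows):
        for j in range(n_cols):
            # Ohm's law gives each device's current; the shared column
            # wire adds them up, which is the "accumulate" step.
            currents[j] += conductances[i][j] * voltages[i]
    return currents


# Illustrative values only: stored weights as conductances, inputs as voltages.
weights = [[1e-6, 2e-6],
           [3e-6, 4e-6]]
inputs = [0.5, 1.0]
print(crossbar_mac(weights, inputs))
```

In hardware the nested loop does not exist: every multiplication and every sum happens at once in the physics of the array, which is where the efficiency comes from.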

The new chip combines an array of 5,832 memristors with an OpenRISC processor. A set of 486 specially designed digital-to-analog converters, 162 analog-to-digital converters, and two mixed-signal interfaces act as translators between the memristors’ analog computations and the main processor.
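The translation role of the converters can be sketched as a three-stage pipeline: digital codes become voltages, the array computes in analog, and the resulting currents are digitized again. The article gives only the converter counts, so the bit widths, voltage and current ranges, and all function names below are assumptions chosen for illustration.

```python
def dac(code, bits, v_max):
    """Map an integer code in [0, 2**bits - 1] to a voltage in [0, v_max]."""
    levels = (1 << bits) - 1
    if not 0 <= code <= levels:
        raise ValueError("code out of range for this DAC")
    return v_max * code / levels


def adc(current, bits, i_max):
    """Quantize a current to an integer code, clipping to [0, i_max]."""
    levels = (1 << bits) - 1
    clipped = min(max(current, 0.0), i_max)
    return round(clipped / i_max * levels)


def run_layer(input_codes, conductances,
              dac_bits=4, adc_bits=8, v_max=1.0, i_max=1e-5):
    """Digital in, digital out, with the multiply-accumulate done in analog.

    All default parameters are hypothetical, not the chip's real values.
    """
    # Digital processor side -> analog array side.
    voltages = [dac(c, dac_bits, v_max) for c in input_codes]
    # Idealized analog step: each column sums conductance * voltage.
    n_cols = len(conductances[0])
    currents = [
        sum(conductances[i][j] * voltages[i] for i in range(len(voltages)))
        for j in range(n_cols)
    ]
    # Analog array side -> digital processor side.
    return [adc(i, adc_bits, i_max) for i in currents]


# Illustrative call: two input codes driving a 2x2 array of conductances.
codes = run_layer([15, 0], [[1e-5, 2e-6],
                            [1e-6, 1e-6]])
print(codes)
```

The sketch also shows why converter resolution matters: whatever precision the analog array achieves, the processor only ever sees the quantized codes on either side of it.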

Source: ieee.org