Reimagining Experimentation Analysis at Netflix

Another day, another custom script to analyze an A/B test. Maybe you’ve done this before and have an old script lying around. If it’s new, it’s probably going to take some time to set up, right?

Not at Netflix. Suppose you’re running a new video encoding test and hypothesize that the two new encodes should reduce play delay, a metric describing how long it takes for a video to start playing after you press the start button. You can open ABlaze (our centralized A/B testing platform) and take a quick look at how the test is performing.

You notice that the first new encode (Cell 2 — Encode 1) increased the mean of the play delay but decreased the median! After recreating the dataset, you can plot the raw numbers and perform custom analyses to understand the distribution of the data across test cells. With our new platform for experimentation analysis, it’s easy for scientists to perfectly recreate analyses on their laptops in a notebook.
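A mean that rises while the median falls usually points to a skewed distribution: most members start playback sooner, but a few wait much longer. The sketch below illustrates the effect with made-up play delay samples; the numbers, the simplified cell labels, and the `summarize` helper are assumptions for illustration only, not Netflix data or part of the platform.

```python
from statistics import mean, median

# Hypothetical play delay samples in milliseconds (invented numbers, not real test data).
play_delay_ms = {
    "Cell 1 - Control": [800, 900, 1000, 1100, 1200],
    # Most sessions start faster, but one slow outlier drags the mean up.
    "Cell 2 - Encode 1": [700, 800, 900, 1000, 4000],
}


def summarize(samples):
    """Return the mean and median of a list of play delay samples."""
    return {"mean": mean(samples), "median": median(samples)}


summary = {cell: summarize(values) for cell, values in play_delay_ms.items()}

for cell, stats in summary.items():
    print(f"{cell}: mean={stats['mean']:.0f} ms, median={stats['median']:.0f} ms")
```

In this toy data the single 4000 ms session is enough to push the mean of the second cell above the control even though the typical session is faster, which is why looking at the raw distribution matters.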

Scientists can then choose from a library of statistics and visualizations, or contribute their own, to get a deeper understanding of the metrics.

Netflix runs on an A/B testing culture: nearly every decision we make about our product and business is guided by member behavior observed in test. At any point, a Netflix user is in many different A/B tests orchestrated through ABlaze.

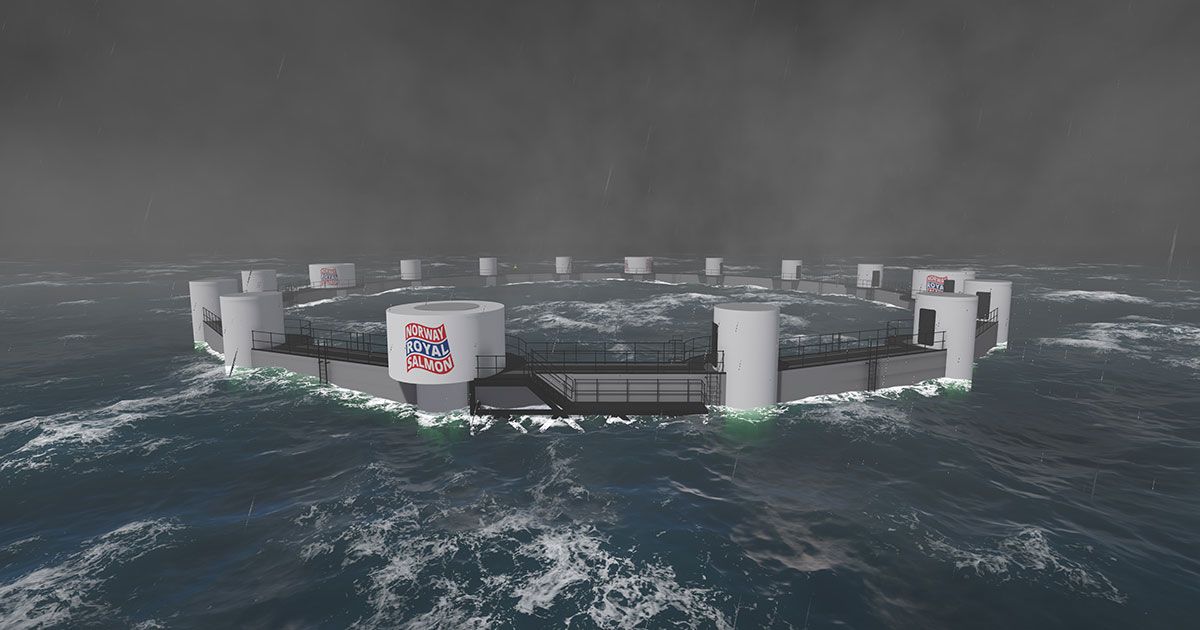

This enables us to optimize their experience at speed. Our A/B tests span UI, algorithm, messaging, marketing, operations, and infrastructure changes. A user might be in a title artwork test, a personalization algorithm test, or a video encoding test, or all three at the same time.

Source: medium.com