Google’s medical AI was super accurate in a lab. Real life was a different story.

If AI is really going to make a difference to patients, we need to know how it works when real humans get their hands on it, in real situations. The covid-19 pandemic is stretching hospital resources to the breaking point in many countries. It is no surprise that many people hope AI could speed up patient screening and ease the strain on clinical staff.

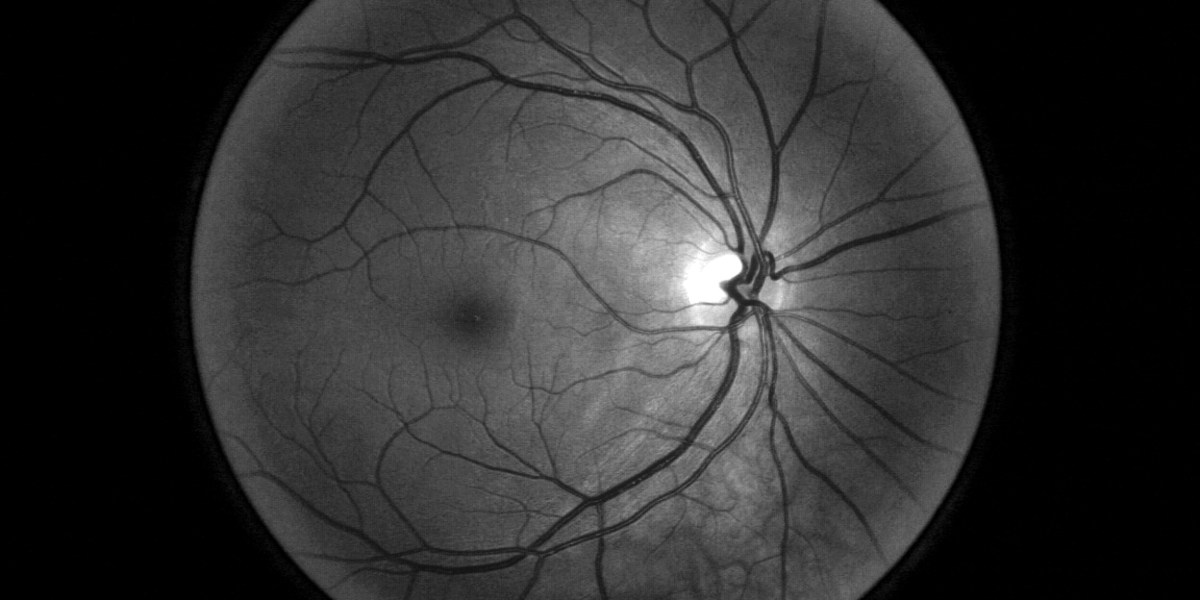

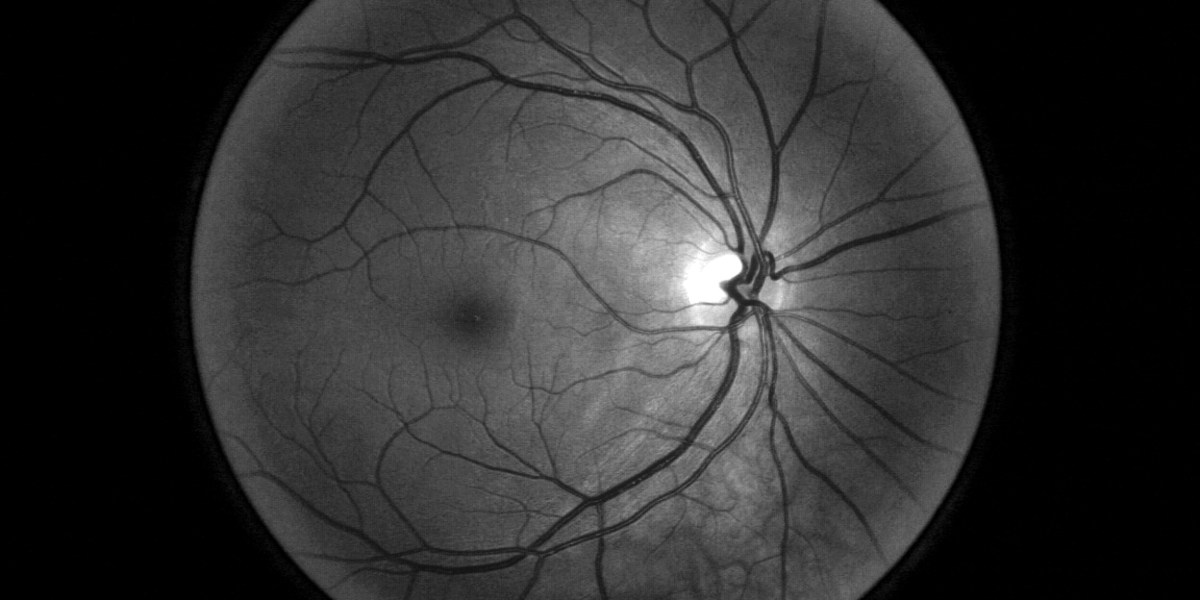

But a study from Google Health, the first to look at the impact of a deep-learning tool in real clinical settings, reveals that even the most accurate AIs can make things worse if they are not tailored to the clinical environments in which they will work. Google’s first opportunity to test the tool in a real setting came from Thailand. The country’s ministry of health has set an annual goal to screen 60% of people with diabetes for diabetic retinopathy, which can cause blindness if not caught early.

But with around 4.5 million patients to only 200 retinal specialists, a ratio roughly double that in the US, clinics are struggling to meet the target. Google has CE mark clearance for the tool, which covers Thailand, but it is still waiting for FDA approval. So to see if AI could help, Beede and her colleagues outfitted 11 clinics across the country with a deep-learning system trained to spot signs of eye disease in patients with diabetes.

Source: technologyreview.com