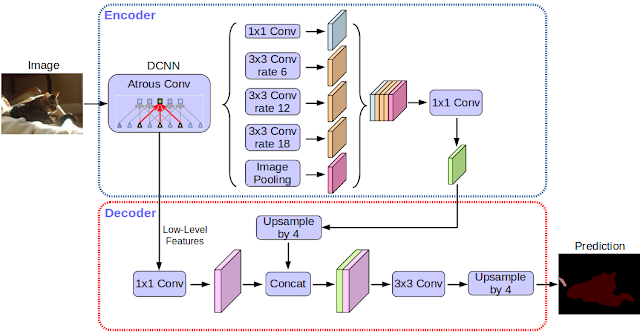

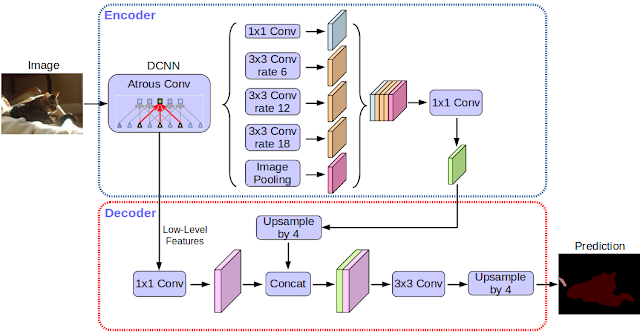

Today, we are excited to announce the open-source release of our latest and best-performing semantic image segmentation model, DeepLab-v3+ [1], implemented in TensorFlow. This release includes DeepLab-v3+ models built on top of a powerful convolutional neural network (CNN) backbone architecture [2, 3] for the most accurate results, intended for server-side deployment. As part of this release, we are also sharing our TensorFlow model training and evaluation code, as well as models pre-trained on the PASCAL VOC 2012 and Cityscapes semantic segmentation benchmarks.
