How to perform a CNI Live Migration from Flannel+Calico to Cilium

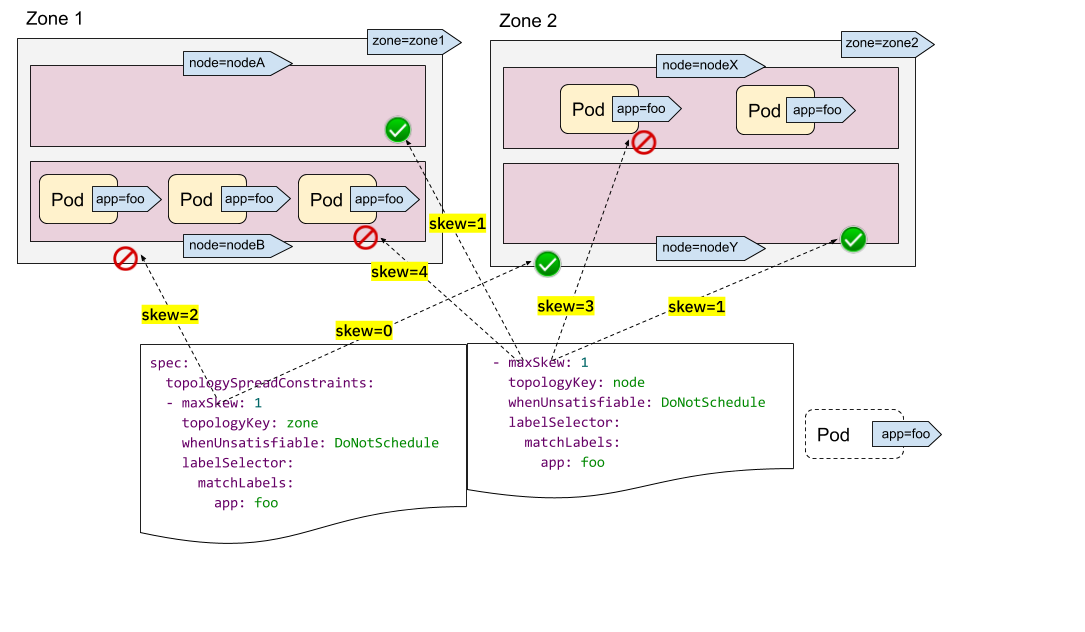

Container Network Interface (CNI) is a big topic, but in short, CNI is a specification that defines the interface container orchestrators use to set up networking between containers. In Kubernetes, the kubelet (through the container runtime) is responsible for invoking the CNI plugin installed on the cluster, so that Pods are attached to the cluster network when they are created and their network resources are properly released when they are deleted. CNI plugins can also provide features beyond setting up routes in the cluster, such as network policy enforcement, encryption, and load balancing.
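
To make the create/delete contract above more concrete, here is a minimal sketch of how a CNI plugin receives its inputs. Under the CNI specification, a plugin is an executable that reads the operation from the `CNI_COMMAND` environment variable and the network configuration as JSON on stdin. The function name `handle_cni_call`, the plugin type `toy-plugin`, and the hardcoded Pod address are hypothetical and exist only for illustration; a real plugin would actually create and remove interfaces in the network namespace.

```python
import json


def handle_cni_call(env, stdin_text):
    """Toy dispatcher mimicking how a CNI plugin reads its inputs.

    `env` stands in for the process environment and `stdin_text` for the
    JSON network configuration the caller writes to the plugin's stdin.
    """
    command = env["CNI_COMMAND"]
    conf = json.loads(stdin_text)

    if command == "ADD":
        # A real plugin would create the interface inside env["CNI_NETNS"]
        # and obtain an address from IPAM. The address below is a
        # hypothetical placeholder.
        return {
            "cniVersion": conf["cniVersion"],
            "interfaces": [
                {"name": env["CNI_IFNAME"], "sandbox": env["CNI_NETNS"]}
            ],
            "ips": [{"address": "10.244.1.5/24", "interface": 0}],
        }

    if command == "DEL":
        # A real plugin would release the address and remove the interface.
        # A successful DEL produces no result.
        return None

    raise ValueError(f"unsupported CNI_COMMAND in this sketch: {command}")


env = {
    "CNI_COMMAND": "ADD",
    "CNI_CONTAINERID": "abc123",
    "CNI_NETNS": "/var/run/netns/demo",
    "CNI_IFNAME": "eth0",
}
conf_text = json.dumps(
    {"cniVersion": "1.0.0", "name": "demo-net", "type": "toy-plugin"}
)

add_result = handle_cni_call(env, conf_text)
del_result = handle_cni_call({**env, "CNI_COMMAND": "DEL"}, conf_text)
print(json.dumps(add_result, indent=2))
```

The same executable handles both Pod creation (`ADD`) and Pod deletion (`DEL`), which is why a misbehaving or missing plugin affects both attaching Pods and cleaning up after them.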

There are many implementations of CNI for various use cases, each with its own advantages and disadvantages. Flannel is one such implementation, backed by iptables, and it is perhaps the simplest and most popular in Kubernetes. Flannel is solely concerned with setting up routing between Pods in the Kubernetes cluster, which it achieves by creating an overlay network using the Virtual Extensible LAN (VXLAN) protocol.
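
As a rough illustration of what a VXLAN overlay implies, the sketch below carves per-node Pod subnets out of a cluster-wide range and computes the MTU left for Pod traffic after encapsulation. The `10.244.0.0/16` range with a `/24` per node matches Flannel's commonly used defaults, but your cluster may be configured differently, and the sequential allocation shown here is a simplification rather than Flannel's actual allocation logic. The helper names are hypothetical.

```python
import ipaddress
from itertools import islice

# Bytes added to each packet by VXLAN encapsulation over IPv4:
# outer IPv4 header (20) + UDP header (8) + VXLAN header (8)
# + inner Ethernet header (14).
VXLAN_OVERHEAD = 20 + 8 + 8 + 14


def node_subnets(cluster_cidr, node_prefix_len, count):
    """Return the first `count` per-node subnets of the cluster range."""
    network = ipaddress.ip_network(cluster_cidr)
    return list(islice(network.subnets(new_prefix=node_prefix_len), count))


def overlay_mtu(underlay_mtu):
    """MTU available to Pods once VXLAN headers are accounted for."""
    return underlay_mtu - VXLAN_OVERHEAD


subnets = node_subnets("10.244.0.0/16", 24, 3)
mtu = overlay_mtu(1500)

for index, subnet in enumerate(subnets):
    print(f"node-{index}: {subnet}")
print(f"overlay MTU: {mtu}")
```

With a standard 1500-byte underlay MTU this yields 1450 bytes for Pod traffic, which is worth keeping in mind when comparing overlay settings between the old and new CNI.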

Another project, Calico, can be run either as a standalone CNI solution or on top of Flannel (a combination called Canal) to provide network policy enforcement.
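
To show what "network policy enforcement" refers to, here is a minimal sketch that builds a standard Kubernetes `NetworkPolicy` denying all ingress traffic to Pods in a namespace. Flannel on its own does not enforce such a policy; a policy-capable component such as Calico is what makes it take effect. The namespace name `demo` and the helper name `default_deny_ingress` are assumptions for illustration. The manifest is emitted as JSON, which `kubectl apply -f` accepts alongside YAML.

```python
import json


def default_deny_ingress(namespace):
    """Build a NetworkPolicy that blocks all ingress in `namespace`."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "default-deny-ingress", "namespace": namespace},
        "spec": {
            # An empty podSelector selects every Pod in the namespace.
            "podSelector": {},
            # Listing Ingress with no ingress rules denies all inbound traffic.
            "policyTypes": ["Ingress"],
        },
    }


policy = default_deny_ingress("demo")
print(json.dumps(policy, indent=2))
```

Applying a policy like this before and after a migration is one simple way to check that enforcement still behaves as expected once the CNI changes.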

Source: cilium.io